Collaboration in Mixed Reality

Details are updated when publicly available. Contact me for more information.

(Ongoing)

My dissertation focuses on Mixed Reality (MR) mediated remote collaboration and includes foundational HCI research as well as the design and evaluation of healthcare-specific systems. I study how MR can help us go "beyond being there" and make communicating with each other over distances easier and more effective.

In particular, I work on collaboration and remote guidance over physical tasks, where individuals work together to perform actions in the real world (like performing surgery). My research explores how the spatial affordances of immersive technology affect the way people make sense of remote environments, share information, and collaborate with others in real time. I then use this understanding to design interaction methods and different ways of representing the environment and collaborators, to ease sensemaking and enrich communication without the need for complete realism.

The dissertation spans a few projects:

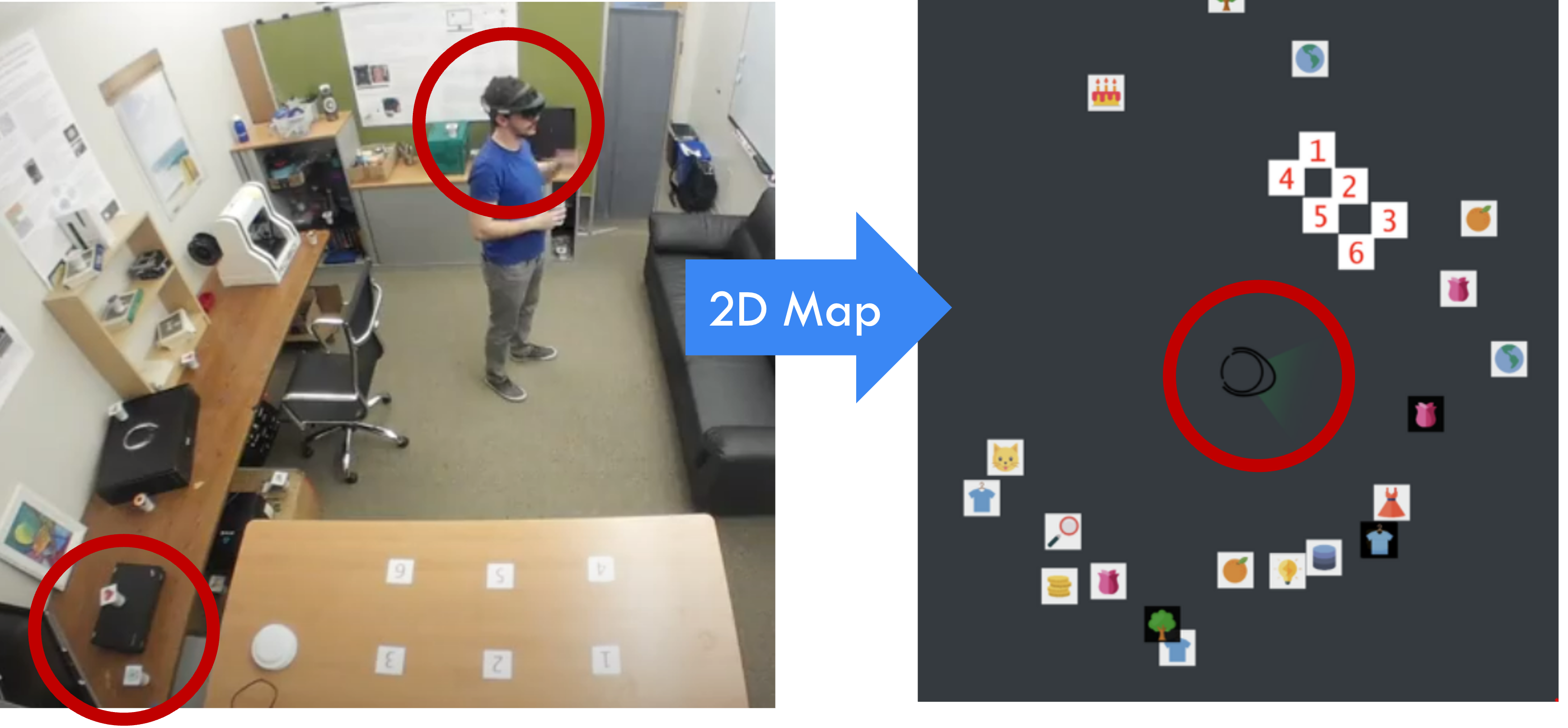

Project MaLi > Uncovering the effects of spatial information and automated guidance to task objects.

This project focuses on referencing - the ability to refer to an object in a way that is understood by others. Because referencing is crucial for communication during physical tasks, we evaluated two ways of easing it in a 2x2 mixed factorial experiment. We also examine how aspects of referencing can be offloaded to reduce collaborators' need for spatial information. This work is published at CHI 2021 (Read the paper, Watch the talk).

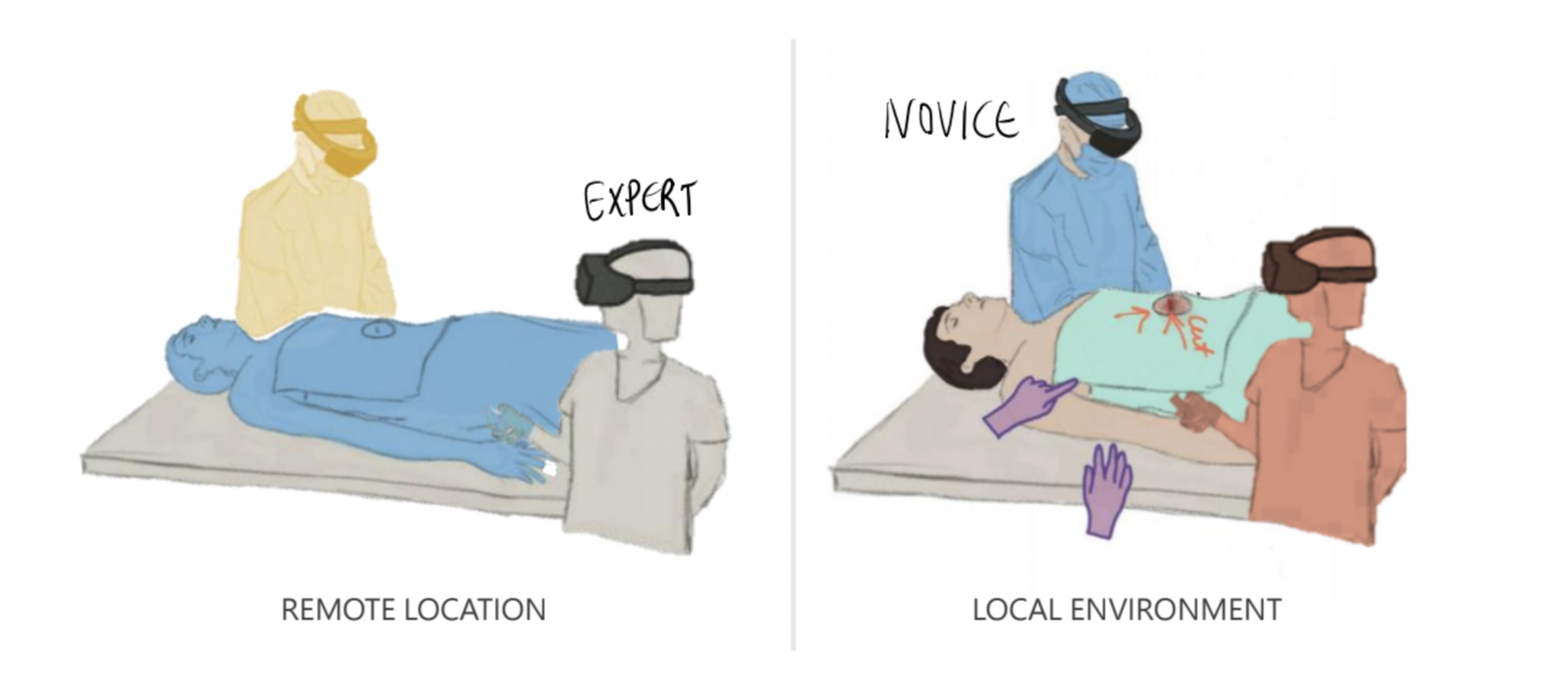

ARTEMIS > Designing and evaluating an XR remote guidance system for open surgery and trauma care.

ARTEMIS is a mixed-modality collaborative system geared towards facilitating remote guidance for surgery and trauma care. It was designed and developed in collaboration with the Naval Medical Center at San Diego and evaluated using cadaver studies for a number of trauma procedures. This work is published at CHI 2021 and has been accepted at the journal Surgery (go to project page).

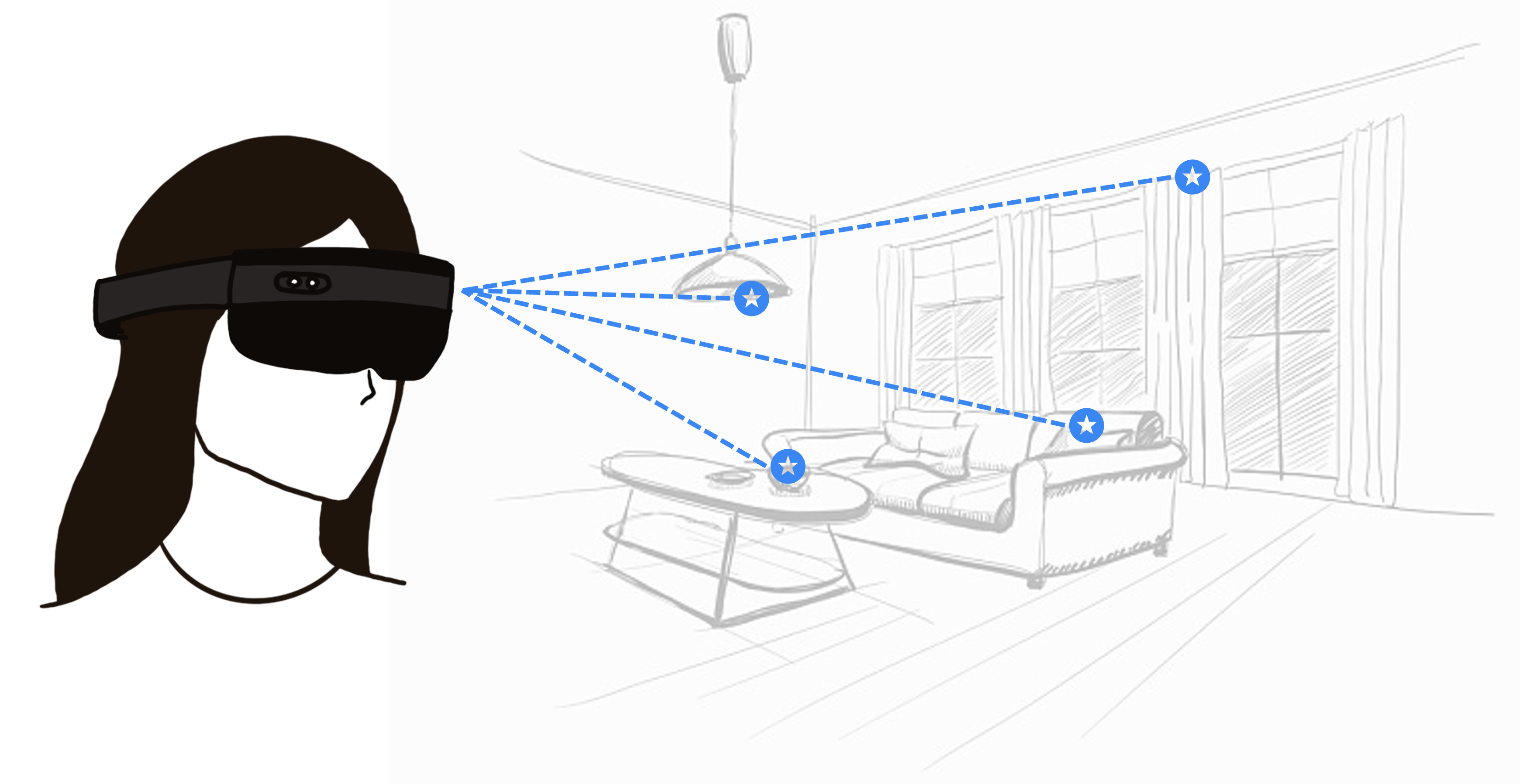

HoloCPR > Designing and evaluating an MR interface for time-critical emergencies.

HoloCPR is an MR application that provides real-time CPR instructions for novices through a combination of visual and spatial cues. The presentation of the instructions was designed specifically for time-critical use and the limited field of view of today's headsets. HoloCPR was evaluated against screen-bound instructions during a lab-based resuscitation scenario. This work is published at Pervasive Health 2018 (go to project page).

UnMapped > Exploiting experts' knowledge in collaborative XR. (Details to come).

XR Collaboration Model > A communication- and cognition-focused conceptual model and review for XR collaboration. (Details to come).

Publications:

Janet G Johnson, Tommy Sharkey, Iramuali Cynthia Butarbutar, Danica Xiong, Ruijie Huang, Lauren Sy, and Nadir Weibel. UnMapped: Leveraging Experts' Situated Experiences to Ease Remote Guidance in Collaborative Mixed Reality. To appear at CHI 2023. [Quick Teaser Video]

Janet G Johnson, Danilo Gasques, Tommy Sharkey, Evan Schmitz, and Nadir Weibel. Do You Really Need to Know Where “That” Is? Enhancing Support for Referencing in Collaborative Mixed Reality Environments. CHI '21, Yokohama, Japan. [Video, Slides]

Danilo Gasques, Janet G Johnson, Tommy Sharkey, Yuanyuan Feng, Ru Wang, Zhuoqun Robin Xu, Enrique Zavala, Yifei Zhang, Wanze Xie, Xinming Zhang, Konrad Davis, Michael Yip, and Nadir Weibel. ARTEMIS: A Collaborative Mixed-Reality System for Immersive Surgical Telementoring. CHI '21, Yokohama, Japan. [Video, Slides]

Janet G Johnson, Danilo Gasques Rodrigues, Madhuri Gubbala, and Nadir Weibel. HoloCPR: Designing and Evaluating a Mixed Reality Interface for Time-Critical Emergencies. PervasiveHealth '18, New York, NY, USA. [Slides]

Collaborators:

Tommy Sharkey, Evan Schmitz, Danilo Gasques, Cynthia Butarbutar, Danica Xiong, Ren Sy, Nadir Weibel, Mark Billinghurst